Evaluating Models Notes – Class 10 AI (417)

Here are the Class 10 AI Evaluating Models notes, designed strictly as per the latest CBSE curriculum. All topics are written with the Board Exam in focus.

What is Evaluation?

Model Evaluation is the process of checking how well a machine learning model performs, using different evaluation metrics.

Model Evaluation = Report Card of AI Model

Process of Evaluation

Build the model → Measure performance using metrics → Improve the model → Repeat until good accuracy is achieved

Importance of Model Evaluation

- Measures model performance using different evaluation metrics

- Gives feedback to improve the model

- Helps identify: Strengths and weaknesses

- Makes AI system more reliable and trustworthy

Evaluation techniques

Train-Test Split

✅ What is Train-Test Split?

- The train-test split is a technique for evaluating the performance of a machine learning algorithm

- It can be used for any supervised learning algorithm

- In this technique, the dataset is divided into:

- Training Data → Model learns from this

- Testing Data → Model is tested on this

- The train-test procedure is appropriate when there is a sufficiently large dataset available
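The split described above can be sketched in a few lines of Python. This is only a minimal illustration using the standard library: the function name `train_test_split`, the 80/20 ratio, the fixed seed, and the small hours-studied dataset are all assumptions made for the example, not part of the syllabus.

```python
import random

def train_test_split(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of rows and split it into (train, test) lists."""
    shuffled = list(rows)
    # Shuffle so that the test rows are a random sample of the dataset
    random.Random(seed).shuffle(shuffled)
    test_size = int(len(shuffled) * test_fraction)
    test = shuffled[:test_size]
    train = shuffled[test_size:]
    return train, test

# Hypothetical dataset: (hours_studied, result) for 10 students
dataset = [(h, "Pass" if h >= 5 else "Fail") for h in range(1, 11)]

train_data, test_data = train_test_split(dataset, test_fraction=0.2)
print("Training rows:", len(train_data))  # 8
print("Testing rows:", len(test_data))    # 2
```

The model would learn only from `train_data`; `test_data` is kept aside and used only to check performance.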

✅ Need of Train-test split

- To check how well the model performs on new unseen data.

- To ensure the model is learning patterns, not memorizing data.

- To get a realistic estimate of model performance.

- To prevent overfitting (model performing well only on training data).

- To simulate real-world use where predictions are made on unknown data.

Overfitting is a situation where a model memorizes its training data instead of learning general patterns. If such a model is evaluated on the same data it was trained on, it shows very high accuracy, but it performs poorly on new, unseen data.
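To see why testing on the training data is misleading, consider a toy "model" that only memorizes. The sketch below is an illustration under assumed data: the hours-studied values, the "5 or more hours means Pass" rule, and the function names are all hypothetical.

```python
# Hypothetical data: (hours_studied, result). Rule behind the data: 5+ hours -> Pass.
train_data = [(1, "Fail"), (2, "Fail"), (4, "Fail"), (6, "Pass"), (8, "Pass"), (9, "Pass")]
test_data = [(3, "Fail"), (5, "Pass"), (7, "Pass"), (10, "Pass")]

# A "model" that only memorizes the training rows instead of learning the rule
memory = dict(train_data)

def memorizing_model(hours):
    # Returns the stored answer if this exact input was seen, otherwise guesses "Fail"
    return memory.get(hours, "Fail")

def accuracy(model, data):
    # Fraction of rows where the model's answer matches the actual result
    correct = sum(1 for hours, actual in data if model(hours) == actual)
    return correct / len(data)

train_accuracy = accuracy(memorizing_model, train_data)
test_accuracy = accuracy(memorizing_model, test_data)
print("Accuracy on training data:", train_accuracy)  # 1.0
print("Accuracy on testing data:", test_accuracy)    # 0.25
```

The memorizing model looks perfect on the training data but gets only 1 of the 4 unseen rows right, which is what the testing data is meant to reveal.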

Accuracy and Error

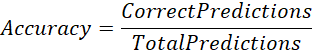

Accuracy

- Accuracy is an evaluation metric that measures the proportion of predictions a model gets right out of all the predictions it makes.

- The accuracy of a model is directly proportional to its performance; better performance leads to more accurate predictions.

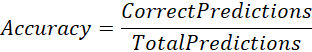

- Formula:

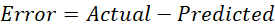

Error

- Error refers to the difference between a model’s prediction and the actual outcome. It quantifies how often the model makes mistakes.

- Error is used to see how accurately our model can predict, both on the data it learns from and on new, unseen data.

- Based on error, the machine learning model that performs best for a particular dataset is selected.

- Formula: Error = Actual value - Predicted value, and Error rate = Error / Actual value

Calculate Accuracy/Error of the AI Model

An AI model predicted 800 emails as spam, but the actual number of spam emails was 900. Calculate the model’s accuracy.

| Predicted spam emails | Actual spam emails | Error (Actual - Predicted) | Error rate (Error/Actual) | Accuracy (1 - Error rate) | Accuracy % |
|---|---|---|---|---|---|
| 800 | 900 | 900-800 = 100 | 100/900 = 0.11 | 1-0.11 = 0.89 | 0.89*100 = 89% |

We can also calculate accuracy in a single step:

Accuracy % = (1 - Error/Actual) × 100 = (1 - 100/900) × 100 ≈ 89%
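The same arithmetic can be checked with a short Python sketch. The function names are made up for this example, and `abs()` is an added assumption so that the error stays positive even when the model predicts more than the actual value.

```python
def error_rate(predicted, actual):
    """Error rate = |actual - predicted| / actual."""
    return abs(actual - predicted) / actual

def accuracy_percent(predicted, actual):
    """Accuracy % = (1 - error rate) * 100."""
    return (1 - error_rate(predicted, actual)) * 100

predicted_spam = 800
actual_spam = 900

print("Error:", abs(actual_spam - predicted_spam))                          # 100
print("Error rate:", round(error_rate(predicted_spam, actual_spam), 2))     # 0.11
print("Accuracy %:", round(accuracy_percent(predicted_spam, actual_spam)))  # 89
```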

Evaluation Metrics for Classification

What is Classification?

- Classification usually refers to a problem where a specific type of class label is the result to be predicted from the given input field of data.

- Example: Fruits vs Grocery, Disease: Yes / No , Pass / Fail

Classification Metrics

- Confusion Matrix

- Accuracy

- Precision

- Recall

- F1 Score

Confusion Matrix

- A confusion matrix summarizes a model’s result, showing correct and incorrect predictions in detail.

- It’s a table used to evaluate a classification model.

- A confusion matrix is handy for evaluating a model with two or more classes.

Confusion Matrix Table

A table that shows actual vs predicted values.

| | Predicted Positive (1) | Predicted Negative (0) |
|---|---|---|
| Actual Positive (1) | TP | FN |
| Actual Negative (0) | FP | TN |

- X-axis shows predicted values

- Y-axis shows actual values

- TP → True Positive → Both Actual & Prediction True

- TN → True Negative → Both Actual & Prediction False

- FP → False Positive → Actual False but Prediction True

- FN → False Negative → Actual True but Prediction False

- 1 → True

- 0 → False

- Numbers in each cell represent how many predictions fall into that category
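The four cells can be counted directly from a list of actual labels and a list of predicted labels. The sketch below assumes labels are coded as 1 (positive) and 0 (negative), as in the table; the function name and the sample lists are hypothetical.

```python
def confusion_counts(actual, predicted):
    """Count TP, TN, FP, FN for labels where 1 = positive and 0 = negative."""
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for a, p in zip(actual, predicted):
        if a == 1 and p == 1:
            counts["TP"] += 1   # actual positive, predicted positive
        elif a == 0 and p == 0:
            counts["TN"] += 1   # actual negative, predicted negative
        elif a == 0 and p == 1:
            counts["FP"] += 1   # actual negative, predicted positive
        else:
            counts["FN"] += 1   # actual positive, predicted negative
    return counts

# Hypothetical labels for 8 test cases
actual    = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 1, 0, 1, 0]

counts = confusion_counts(actual, predicted)
print(counts)  # {'TP': 3, 'TN': 3, 'FP': 1, 'FN': 1}
```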

Understanding True/False Positives and Negatives

| Term | What it Means | Example (Disease) |
|---|---|---|
| True Positive | Model correctly predicts Positive | Predicted → “Yes, they have disease”; Actual → They do have the disease |
| True Negative | Model correctly predicts Negative | Predicted → “No, they don’t have disease”; Actual → They don’t have the disease |
| False Positive | Model wrongly predicts Positive | Predicted → “Yes, they have disease”; Actual → They don’t have the disease |
| False Negative | Model wrongly predicts Negative | Predicted → “No, they don’t have disease”; Actual → They have the disease |

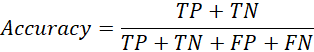

Accuracy from Confusion Matrix

- Accuracy is the number of correct predictions made as a ratio of all predictions made.

- Total Predictions = TP + TN + FP + FN

- Classification Accuracy = Correct Predictions / Total Predictions

= (TP + TN) / (TP + TN + FP + FN)

Accuracy works well only when:

- Dataset is balanced

- All predictions and prediction errors are equally important

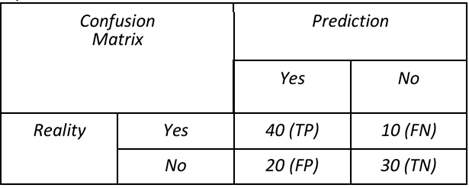

Construct Confusion Matrix – Calculate Accuracy of Classification Model

A school recently tested an AI model designed to predict whether students would pass or fail their final exams. Out of 100 students, the model correctly predicted that 40 students would pass and they actually did. It also correctly identified 30 students who were going to fail. However, the model predicted that 20 students would pass, but they ended up failing. Additionally, it predicted that 10 students would fail, but they actually passed.

a) Draw the confusion matrix based on the above information.

b) Calculate the accuracy of this classification model. Show your working.

c) Write the total number of wrong predictions made by the model.

a) Drawing the confusion matrix

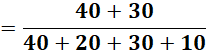

b) Calculate accuracy of classification model

Classification Accuracy = (TP + TN) / (TP + TN + FP + FN)

= (40 + 30) / (40 + 30 + 20 + 10) = 70 / 100 = 0.70

Accuracy% = 0.70 × 100 = 70%

c) Wrong predictions = FP + FN = 20+10 = 30
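The working for parts (b) and (c) can be double-checked with a few lines of Python. The variable names are chosen for this sketch, and "Pass" is assumed to be the positive class.

```python
# Counts taken from the school example ("Pass" is treated as the positive class)
tp = 40  # predicted Pass, actually passed
tn = 30  # predicted Fail, actually failed
fp = 20  # predicted Pass, actually failed
fn = 10  # predicted Fail, actually passed

total = tp + tn + fp + fn
accuracy_percent = 100 * (tp + tn) / total
wrong = fp + fn

print("Total predictions:", total)       # 100
print("Accuracy %:", accuracy_percent)   # 70.0
print("Wrong predictions:", wrong)       # 30
```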

Unbalanced Dataset Problem

- 1000 students, of which 900 pass and 100 fail

- Model predicts everyone as “Pass”

- Accuracy = 90%. But model is useless! So accuracy alone is misleading.
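A small sketch makes the problem concrete. It rebuilds the 900 Pass / 100 Fail situation with plain Python lists; the variable names are assumptions made for this illustration.

```python
# 1000 students: 900 actually pass, 100 actually fail
actual = ["Pass"] * 900 + ["Fail"] * 100

# A "model" that ignores its input and always predicts "Pass"
predicted = ["Pass"] * len(actual)

correct = sum(1 for a, p in zip(actual, predicted) if a == p)
accuracy_percent = 100 * correct / len(actual)

# How many of the 100 failing students did the model identify?
fails_caught = sum(1 for a, p in zip(actual, predicted) if a == "Fail" and p == "Fail")

print("Accuracy %:", accuracy_percent)               # 90.0
print("Failing students identified:", fails_caught)  # 0
```

The accuracy looks high, yet the model never identifies a single failing student, which is why other metrics are needed for unbalanced data.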

Precision from Confusion Matrix

- Precision is the ratio of correctly predicted positive cases to the total predicted positive cases.

- Formula: Precision = TP / (TP + FP)

Out of predicted positives, how many were correct?

Precision: When should we use it?

- For unbalanced datasets

- When dealing with False Positives (FP) is important

- Focus is to reduce wrong positive predictions

- Ensures predicted positives are mostly correct
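Precision, as defined above, is TP divided by all predicted positives (TP + FP). The sketch below applies it to the counts from the school example (TP = 40, FP = 20). The function name is hypothetical, and returning 0.0 when nothing is predicted positive is an assumed convention to avoid dividing by zero.

```python
def precision(tp, fp):
    """Precision = TP / (TP + FP)."""
    predicted_positive = tp + fp
    if predicted_positive == 0:
        return 0.0  # assumed convention: no positive predictions at all
    return tp / predicted_positive

# School example: 40 correct "Pass" predictions, 20 wrong "Pass" predictions
school_precision = precision(tp=40, fp=20)
print(round(school_precision, 2))  # 0.67
```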

Recall (Sensitivity)

- Recall is the ratio of correctly predicted positive cases to all actual positive cases: Recall = TP / (TP + FN).

Recall: Where should we use it?

- Recall is generally used for unbalanced datasets when dealing with False Negatives becomes important.

- The model needs to reduce the FNs as much as possible.

- Example:

- Disease detection

- COVID detection

- Fraud detection
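Recall is usually computed as TP divided by all actual positives (TP + FN). The sketch below applies that to the counts from the school example (TP = 40, FN = 10). The function name is hypothetical, and returning 0.0 when there are no actual positives is an assumed convention.

```python
def recall(tp, fn):
    """Recall = TP / (TP + FN)."""
    actual_positive = tp + fn
    if actual_positive == 0:
        return 0.0  # assumed convention: no actual positive cases in the data
    return tp / actual_positive

# School example: 40 passing students found, 10 passing students missed
school_recall = recall(tp=40, fn=10)
print(school_recall)  # 0.8
```

Each missed positive (FN) lowers recall, which is why it is the metric to watch in cases such as disease or fraud detection.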

F1 Score

- F1 Score combines both precision and recall into a single metric that reflects both properties.

- It is useful for unbalanced datasets, especially when it is unclear whether reducing False Positives (FP) or False Negatives (FN) is more important.

Use F1 Score when:

- Dataset is unbalanced

- Unsure whether FP or FN is more important
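The standard formula for F1 Score is 2 × Precision × Recall / (Precision + Recall). The sketch below applies it to the counts from the school example (TP = 40, FP = 20, FN = 10). The function name is hypothetical, and returning 0.0 when there are no true positives is an assumed convention.

```python
def f1_score(tp, fp, fn):
    """F1 = 2 * precision * recall / (precision + recall)."""
    if tp == 0:
        return 0.0  # assumed convention: no true positives gives an F1 of 0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# School example: precision = 40/60, recall = 40/50
school_f1 = f1_score(tp=40, fp=20, fn=10)
print(round(school_f1, 2))  # 0.73
```

Because F1 drops when either precision or recall is low, a model has to do reasonably well on both to score high.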

Example: Fraud Detection

- False Negative = Fraud predicted as Normal

- Very dangerous, so Recall should be high.

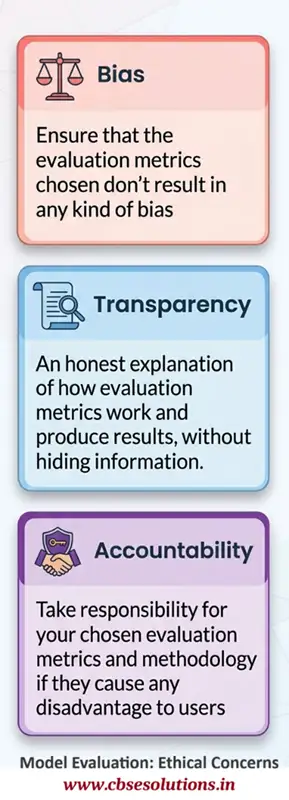

Ethical Concerns in Model Evaluation