Making Machines See Notes – Class 12 AI (843) | Unit-3 Computer Vision

Master every concept from Unit-3 Making Machines See with these Class 12 AI notes. We’ve simplified Computer Vision as per the latest CBSE curriculum into clear, easy-to-understand points designed specifically for your board exams. Simple explanations, focused content—everything you need to score full marks.

Computer Vision is a branch of Artificial Intelligence (AI) that uses sensing devices and deep learning techniques to enable machines to understand and interpret visual information.

What is Computer Vision?

- It works much like human vision: cameras, data, and algorithms play the roles of the human retina, optic nerves, and visual cortex.

- CV extracts meaningful information from images, videos, and other visual inputs.

- Computer Vision systems are used to inspect products, monitor infrastructure, and analyze production processes in real-time.

- Deep learning models in CV achieve high accuracy in tasks like facial recognition, object detection, and image classification.

Working of Computer Vision

- Computer Vision focuses on processing and analysing digital images and videos to understand their content.

- Understanding digital images is a fundamental part of computer vision.

Basics of Digital Images

- A digital image is a picture stored as a sequence of numbers that a computer can interpret.

- Digital images can be created in several ways, such as:

- Design software (e.g., Paint, Photoshop)

- Digital cameras

- Scanner

Interpretation of Image in Digital Form

- A computer sees an image as a collection of tiny units called pixels (picture elements).

- Each pixel represents a specific color value. These pixels together form the digital image.

- During digitization, an image is converted into a grid of pixels.

- The resolution of an image is determined by its number of pixels:

- More pixels → Higher resolution → More detail

- In monochrome (grayscale) images, pixel values range from 0 to 255, where 0 = black and 255 = white. Values in between are shades of grey.

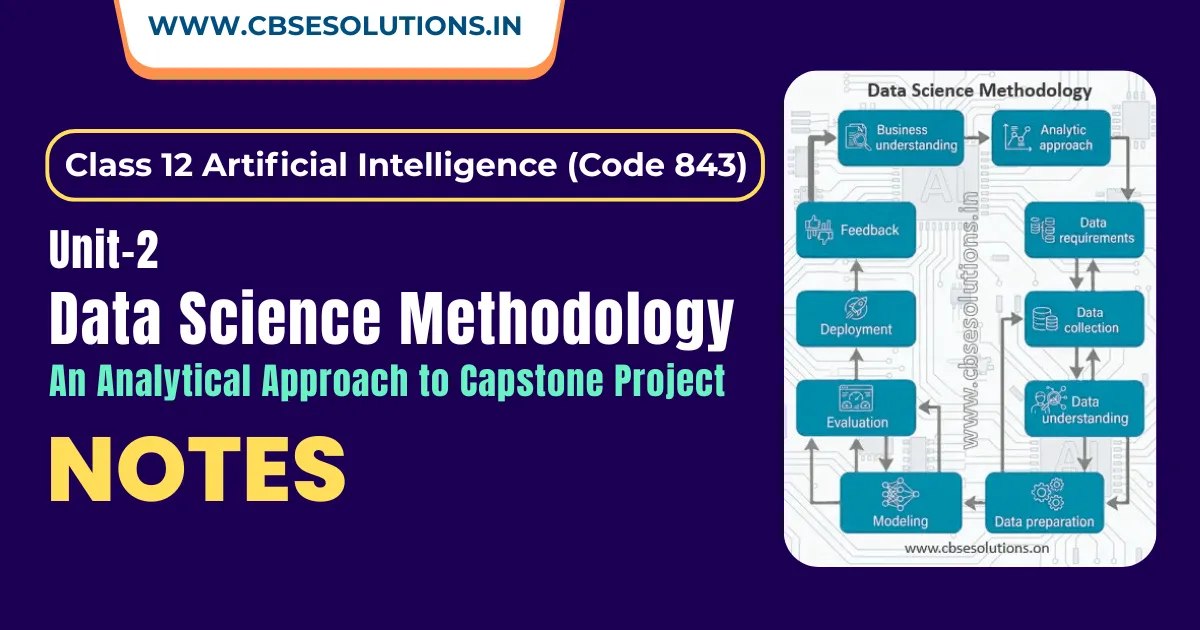
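
To make the idea of a pixel grid concrete, here is a minimal sketch in plain Python. The 4 × 4 image and its values are made up for illustration; real images are far larger and are usually loaded with an imaging library rather than typed by hand.

```python
# A tiny 4x4 grayscale image written out by hand (illustrative values only).
# Each number is one pixel: 0 = black, 255 = white, in between = shades of grey.
image = [
    [0, 64, 128, 255],
    [0, 64, 128, 255],
    [0, 64, 128, 255],
    [0, 64, 128, 255],
]

height = len(image)        # number of rows in the grid
width = len(image[0])      # number of columns in the grid
total_pixels = width * height

print(f"Resolution: {width} x {height} = {total_pixels} pixels")
print("Top-right pixel value:", image[0][3])  # the brightest column
```

An image with more rows and columns has more pixels, which is what "higher resolution" means in the points above.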

Computer Vision – Process:

The Computer Vision process involves five stages, which are explained below.

Image Acquisition

- Image Acquisition is the first stage.

- It involves capturing digital images or videos and provides the raw data for further analysis.

- The quality of acquired images affects further processing and analysis.

- Imaging device capabilities and resolution impact image quality.

- Higher resolution captures more details and clearer images.

- Image acquisition is affected by factors like:

- Lighting conditions

- Angle of capture

- In scientific and medical fields, advanced imaging techniques such as MRI and CT scans are used.

Preprocessing:

- Preprocessing in Computer Vision improves the quality of the acquired image.

- It uses various techniques to make images suitable for analysis.

Common Preprocessing Techniques

- Noise Reduction

- Removes unwanted elements like blurriness, spots, or distortions and makes the image clearer.

- Example: Removing grainy effects in low-light images.

- Image Normalization

- Standardizes pixel values across images. Adjusts values to a fixed range (e.g., 0–1 or -1 to 1).

- Ensures consistency in datasets.

- Example: Converting pixel values from 0–255 to 0–1.

- Resizing/Cropping

- Changes image size or aspect ratio and ensures all images have the same dimensions.

- Example: Resizing images to 224 × 224 pixels.

- Histogram Equalization

- Adjusts brightness and contrast.

- Spreads pixel intensity evenly and enhances details in dark or bright areas.

- Example: Improving low-contrast images.

- Goal of Preprocessing

- Remove noise or disturbances.

- Highlight important features.

- Maintain consistency and uniformity in images.
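
The four preprocessing techniques above can be sketched in plain Python on a grayscale image stored as a list of rows. This is a simplified illustration, not how a production library does it: the function names are hypothetical, the noise reduction uses a 3 × 3 median filter (one of several possible choices), and the resizing uses nearest-neighbour sampling.

```python
def normalize(image):
    """Image normalization: scale 8-bit pixel values from 0-255 to 0-1."""
    return [[pixel / 255 for pixel in row] for row in image]


def median_filter(image):
    """Noise reduction: replace each pixel with the median of its neighbourhood.

    The neighbourhood is the 3x3 window around the pixel, clipped at the
    image border. For an even number of neighbours the upper middle value
    is used.
    """
    h, w = len(image), len(image[0])
    result = [row[:] for row in image]
    for y in range(h):
        for x in range(w):
            neighbours = sorted(
                image[ny][nx]
                for ny in range(max(0, y - 1), min(h, y + 2))
                for nx in range(max(0, x - 1), min(w, x + 2))
            )
            result[y][x] = neighbours[len(neighbours) // 2]
    return result


def resize_nearest(image, new_h, new_w):
    """Resizing: build a new_h x new_w image by copying the nearest source pixel."""
    h, w = len(image), len(image[0])
    return [
        [image[y * h // new_h][x * w // new_w] for x in range(new_w)]
        for y in range(new_h)
    ]


def equalize_histogram(image, levels=256):
    """Histogram equalization: spread the used intensities over the full range."""
    pixels = [p for row in image for p in row]
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    # Cumulative distribution: how many pixels are at or below each level.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)
    total = len(pixels)
    if total == cdf_min:  # every pixel has the same value; nothing to spread
        return [row[:] for row in image]
    return [
        [round((cdf[p] - cdf_min) * (levels - 1) / (total - cdf_min)) for p in row]
        for row in image
    ]


# A low-contrast 2x2 image: all values sit close together around 100.
low_contrast = [[100, 101], [102, 103]]
print(equalize_histogram(low_contrast))  # values are pushed apart towards 0 and 255
```

In practice these steps are usually applied with an image-processing library; the sketch only shows what each technique does to the pixel values.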

Feature Extraction

- Feature Extraction involves identifying and extracting important visual patterns or attributes from a pre-processed image.

- The methods used for feature extraction depend on:

- Complexity of the image

- Available computational resources

- Requirements of the application

- Types of Feature Extraction

- Edge Detection

- Identifies boundaries between regions.

- Detects sharp changes in intensity.

- Corner Detection

- Identifies points where two or more edges meet.

- Focuses on areas with high curvature or sharp changes.

- Texture Analysis

- Extracts patterns like smoothness, roughness, or repetition.

- Colour-Based Feature Extraction

- Analyses colour distribution in an image.

- Helps differentiate objects based on colour.

- In deep learning approaches, feature extraction is done automatically using Convolutional Neural Networks (CNNs) during training.
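
Two of the hand-crafted feature types above, edge detection and colour-based features, can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions: the function names are hypothetical, the edge measure is a basic difference between neighbouring pixels rather than a named operator, and the colour feature is just the average of each RGB channel.

```python
def edge_strength(image):
    """Edge detection sketch: measure how sharply intensity changes at each pixel.

    For every pixel, add the absolute difference to its right neighbour and
    to the neighbour below it. Large values mark boundaries between regions.
    """
    h, w = len(image), len(image[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = image[y][x + 1] - image[y][x] if x + 1 < w else 0
            dy = image[y + 1][x] - image[y][x] if y + 1 < h else 0
            edges[y][x] = abs(dx) + abs(dy)
    return edges


def mean_colour(rgb_image):
    """Colour feature sketch: average red, green, and blue over the whole image."""
    pixels = [pixel for row in rgb_image for pixel in row]
    n = len(pixels)
    return tuple(sum(pixel[c] for pixel in pixels) / n for c in range(3))


# Grayscale image: dark left half, bright right half (illustrative values).
half_and_half = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [0, 0, 255, 255],
]
for row in edge_strength(half_and_half):
    print(row)  # the only non-zero column is where dark meets bright

# A small, mostly red RGB image; each pixel is an (R, G, B) tuple.
red_patch = [[(255, 0, 0), (255, 0, 0)], [(200, 0, 0), (200, 0, 0)]]
print(mean_colour(red_patch))
```

A CNN learns filters that play a similar role to `edge_strength`, but it discovers them from training data instead of having them written by hand.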

Detection/Segmentation

- Detection/Segmentation focuses on identifying objects or regions of interest in an image.

- It is important for applications like:

- Autonomous driving

- Medical imaging

- Object tracking

- This stage is divided into:

- Single Object Tasks

- Multiple Object Tasks

Single Object Tasks

- Focus on analysing or identifying a single object in an image with two main objectives:

- Classification

- Determines the category or class of an object, which helps identify it. It can use algorithms such as KNN (K-Nearest Neighbour), which is supervised, or K-means clustering, which is unsupervised.

- Classification + Localization

- Classifies the object and also locates it. It uses bounding boxes to show object position.
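
The two single-object tasks can be sketched as follows. This is a minimal illustration, not a complete vision system: the feature values ("redness", "roundness"), the fruit labels, and the function names are all made up for the example, and the bounding box is computed from a ready-made binary mask rather than predicted by a model.

```python
from collections import Counter
from math import dist


def knn_classify(sample, training_data, k=3):
    """Classification with KNN: vote among the k closest labelled examples.

    training_data is a list of (feature_vector, label) pairs.
    """
    nearest = sorted(training_data, key=lambda item: dist(sample, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def bounding_box(mask):
    """Localization: return (top, left, bottom, right) around the object pixels.

    mask is a grid of 0s (background) and 1s (object). Returns None if the
    mask contains no object pixels.
    """
    rows = [y for y, row in enumerate(mask) if any(row)]
    cols = [x for x in range(len(mask[0])) if any(row[x] for row in mask)]
    if not rows:
        return None
    return (rows[0], cols[0], rows[-1], cols[-1])


# Hypothetical training set: (redness, roundness) features with labels.
training = [
    ((0.90, 0.90), "apple"),
    ((0.80, 0.85), "apple"),
    ((0.85, 0.95), "apple"),
    ((0.20, 0.10), "banana"),
    ((0.30, 0.20), "banana"),
    ((0.25, 0.15), "banana"),
]
print(knn_classify((0.82, 0.90), training))  # closest examples are all apples

# Hypothetical object mask: the object occupies the middle 2x2 block.
mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
print(bounding_box(mask))
```

KNN is supervised because every training example carries a label; K-means, by contrast, would group the same feature vectors without being told which fruit each one is.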

Multiple Object Tasks

- Multiple Object Tasks deal with images containing multiple objects or different classes.

- Aim to identify and distinguish between various objects in an image.

Types of Multiple Object Tasks

- Object Detection

- Identifies and locates multiple objects in an image.

- Draws bounding boxes around each object.

- Assigns class labels to detected objects.

- Uses features and learning algorithms for recognition.

- Common algorithms: R-CNN, R-FCN, YOLO (You Only Look Once), SSD (Single Shot Detector)

- Image Segmentation

- Image Segmentation creates masks around pixels with similar characteristics.

- It assigns a class to pixels in the input image.

- Helps in understanding the image at a detailed (granular) level.

- Each object is represented using a pixel-wise mask.

- Makes it easier to identify and separate different objects.

- Uses techniques like edge detection (detecting changes in brightness).

- There are different types of image segmentation available.

- Types of Image Segmentation

- Semantic Segmentation

- Classifies pixels of the same class together.

- Does not differentiate between objects of the same class.

- Instance Segmentation

- Classifies pixels for each individual object.

- Differentiates between objects even if they belong to the same class.
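
The difference between the two segmentation types can be seen in a small plain-Python sketch. It rests on strong simplifying assumptions: objects are simply "bright pixels" found with a fixed threshold, and separate objects are told apart by grouping touching pixels. Real segmentation models learn the classes from data; the function names here are hypothetical.

```python
from collections import deque


def semantic_mask(image, threshold=128):
    """Semantic segmentation sketch: label each pixel 1 (object) or 0 (background).

    All object pixels share the same label, so separate objects of the same
    class are not told apart.
    """
    return [[1 if pixel >= threshold else 0 for pixel in row] for row in image]


def instance_labels(mask):
    """Instance segmentation sketch: give each connected object its own ID.

    Pixels touching up, down, left, or right belong to the same object.
    Returns the grid of IDs (0 = background) and the number of objects.
    """
    h, w = len(mask), len(mask[0])
    labels = [[0] * w for _ in range(h)]
    next_id = 0
    for y in range(h):
        for x in range(w):
            if mask[y][x] and not labels[y][x]:
                next_id += 1
                labels[y][x] = next_id
                queue = deque([(y, x)])
                while queue:  # spread the ID to every touching object pixel
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not labels[ny][nx]:
                            labels[ny][nx] = next_id
                            queue.append((ny, nx))
    return labels, next_id


# Two bright objects of the same kind on a dark background (illustrative values).
image = [
    [200, 200, 0, 0, 0],
    [200, 200, 0, 0, 0],
    [0, 0, 0, 210, 210],
    [0, 0, 0, 210, 210],
]
mask = semantic_mask(image)
labels, count = instance_labels(mask)
print(mask)    # both objects carry the same label
print(labels)  # each object carries its own ID
print("Objects found:", count)
```

In the semantic mask both objects are marked with the same value, while the instance labels distinguish the first object from the second.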

High-Level Processing

- High-Level Processing is the final stage of computer vision.

- It interprets and extracts meaningful information from detected objects or regions in images.

- Helps computers achieve a deeper understanding of visual content.

- Enables informed decision-making based on visual data.

- Tasks include:

- Object recognition

- Scene understanding

- Context analysis

Applications of Computer Vision

- Facial Recognition

- Used by social media platforms to detect and tag users.

- Healthcare

- Detects diseases and abnormalities.

- Helps in identifying cancerous tumours.

- Self-Driving Vehicles

- Understand surroundings using cameras.

- Detect vehicles, objects, traffic signals, and pedestrians.

- Optical Character Recognition (OCR)

- Extracts text from images or documents.

- Used in invoices, bills, and articles.

- Machine Inspection

- Detects defects and flaws in machines or products.

- Helps in quality control during manufacturing.

- 3D Model Building

- Creates 3D models from real-world objects.

- Used in robotics, autonomous driving, 3D tracking, and AR/VR.

- Surveillance

- Uses CCTV footage to monitor activities.

- Identifies suspicious behaviour and prevents crimes.

- Fingerprint Recognition and Biometrics

- Identifies individuals using fingerprints or biometric data.

- Used for authentication and security.

Challenges of Computer Vision

- Reasoning and Analytical Issues

- Requires accurate interpretation, not just image identification.

- Needs strong reasoning and analytical capabilities.

- Without these, extracting meaningful insights becomes difficult.

- Difficulty in Image Acquisition

- Affected by lighting variations, different perspectives, and scales.

- Complex scenes with multiple objects increase difficulty.

- Occlusion (objects blocking each other) adds complexity.

- High-quality image data is necessary for accurate analysis.

- Privacy and Security Concerns

- Surveillance systems may violate privacy rights.

- Facial recognition raises ethical issues.

- Requires careful handling and regulations.

- Duplicate and False Content

- Risk of fake or misleading images/videos.

- Exploitation of system vulnerabilities by malicious users.

- Data breaches can spread duplicate or false content.

- Leads to misinformation and reputational damage.

Future of Computer Vision

- Computer Vision has evolved from basic image processing to advanced systems with human-like precision.

- Growth is driven by:

- Advances in deep learning algorithms

- Availability of large amounts of labelled data

- Machines can now perceive and analyse images and videos effectively.

- Future possibilities of computer vision are vast and impactful.

- It will have a significant and far-reaching impact on society.

- Computer vision can bring transformative benefits for humanity.