Understanding Neural Network Notes – Class 12 AI (843) | Exam Ready Notes

Your search for the best notes on Understanding Neural Networks for Class 12 AI (843) ends here!

These Understanding Neural Network notes cover every essential topic, from ‘What is a Neural Network’ to its ‘Impact on society’. They are fully updated for the latest CBSE syllabus, so everything you need for your Board exam preparation is right here!

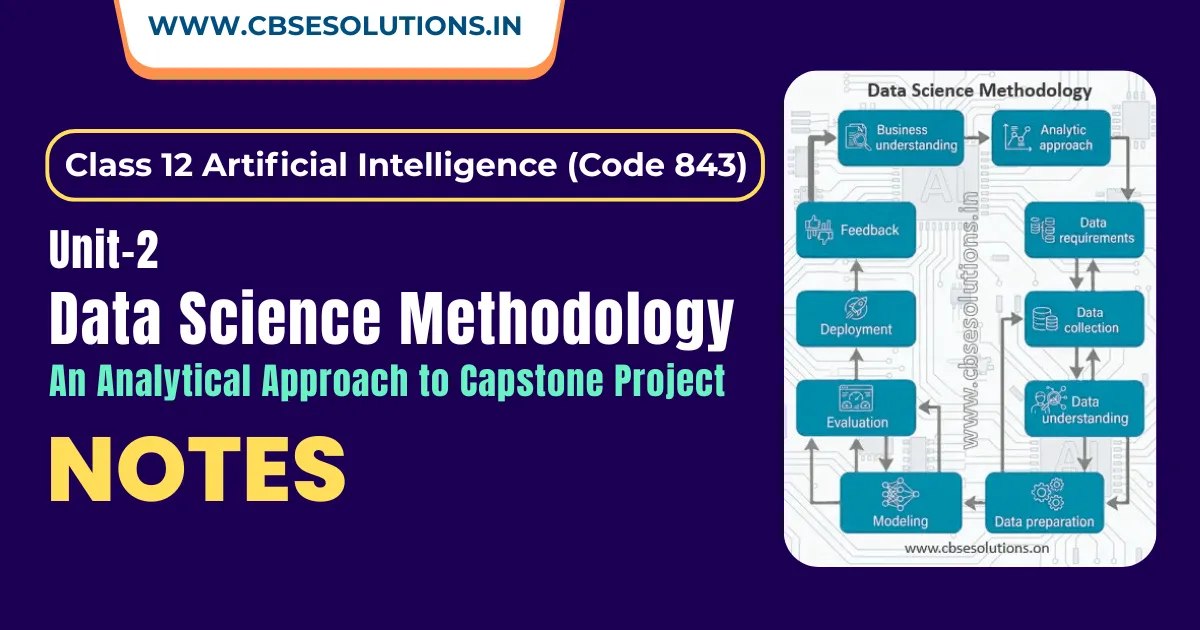

What is a Neural Network?

A neural network is a machine learning model that works in a way similar to the human brain. It uses interconnected artificial neurons to process information, identify patterns, compare different possibilities, and make intelligent decisions or predictions.

- Artificial Intelligence copies the working style of human neurons to create an Artificial Neural Network (ANN).

- Neural networks can automatically extract important features from data without needing direct instructions from the programmer.

- They are commonly used in:

  - Chatbots

  - Auto-reply emails

  - Email reply suggestions

  - Spam filtering

  - Facebook image tagging

  - Online shopping recommendations

  - Search engines

Parts of a Neural Network

1. Input Layer

- It receives the input data from the user or dataset.

- Each node in this layer represents a specific feature or attribute of the input data.

2. Hidden Layer(s)

- Hidden layers are placed between the input layer and the output layer.

- Each hidden layer contains artificial neurons (nodes) that process the input data.

- The neurons (nodes) are interconnected, and each connection has an associated weight.

- These weights help the network learn patterns and relationships in the data.

3. Output Layer

- The output layer is the final layer of the neural network.

- It produces the final result, prediction, or decision.

- The number of output nodes depends on the type of problem being solved.

Deep Neural Network and Deep Learning

- An Artificial Neural Network (ANN) having two or more hidden layers is called a Deep Neural Network (DNN).

- The process of training deep neural networks is known as Deep Learning.

- The word “deep” refers to the large number of hidden layers present in the network.

- A neural network with more than three layers (including input and output layers) is generally considered a deep learning model.

- A neural network with only one hidden layer is considered a basic neural network.

Components of a Neural Network

The main components of a neural network are as follows:

1. Neurons (Nodes)

- Neurons are the basic building blocks of a neural network.

- They are also called nodes.

- Neurons receive input from other neurons or external sources.

- Each neuron receives input values, calculates their weighted sum, applies an activation function, and then generates an output.

2. Weights

- Weights represent the strength of connection between neurons.

- Every connection in a neural network has a weight value.

- Weights decide the importance of an input feature in predicting the output.

- During training, the network adjusts the weights to reduce errors and improve accuracy.

3. Activation Functions

- An activation function acts as a decision maker for a neuron.

- Activation functions decide whether a neuron should be activated (send a signal) or not.

- Activation functions help neural networks learn complex patterns in data.

- They introduce non-linearity into the model, making it more powerful.

- Common activation functions include the Sigmoid function, the Tanh function, and ReLU (Rectified Linear Unit).
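
The three activation functions named above can be sketched in plain Python. This is a minimal illustration rather than part of the syllabus text, and the function names are chosen here for readability.

```python
import math

def sigmoid(z):
    # Squashes any real number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-z))

def tanh(z):
    # Squashes any real number into the range (-1, 1).
    return math.tanh(z)

def relu(z):
    # Passes positive values through unchanged and turns negatives into 0.
    return max(0.0, z)

for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), tanh(z), relu(z))
```

All three are non-linear, which is what lets a stack of layers learn patterns that a single straight-line rule cannot.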

4. Bias

- Bias is a constant value added to the weighted sum before applying the activation function.

- It helps the network make better predictions.

- Bias allows the activation function to shift horizontally.

- It also helps in handling inherent bias present in the data.
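
A small sketch of how bias shifts a neuron's response. The single-input sigmoid neuron and the values of `w` and `b` below are assumptions chosen only for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def neuron(x, w, b):
    # Weighted input plus bias, passed through the sigmoid activation.
    return sigmoid(w * x + b)

# With bias 0 the output crosses 0.5 at x = 0.
print(neuron(0.0, 1.0, 0.0))   # 0.5
# With bias -2 the same crossing point moves to x = 2.
print(neuron(2.0, 1.0, -2.0))  # 0.5
```

Changing only the bias moves the point at which the neuron starts to respond, without changing the shape of the activation curve.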

5. Connections

- Connections represent the synapses (junctions) between neurons.

- Each connection has a weight that determines the strength of influence one neuron has on another neuron.

- Bias values are also associated with neurons to control activation.

6. Learning Rule

- Neural networks learn by adjusting weights and biases.

- The learning rule defines how these adjustments are made during training.

- The goal is to reduce the error in predictions.

- One common learning algorithm is Backpropagation.

7. Propagation Functions

- Propagation functions control how data moves through the network.

Forward Propagation

- In forward propagation, input data moves from the input layer to the output layer.

- Each layer processes the data and computes activations.

- The network produces a predicted output.

- The predicted output is compared with the actual output to calculate error (loss).
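
Forward propagation can be sketched for a tiny network with two inputs, two hidden neurons, and one output neuron. The layer sizes, weights, biases, the sigmoid activation, and the squared-error loss are all assumptions made for this example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights, biases):
    # Each neuron computes its weighted sum plus bias, then applies sigmoid.
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(z))
    return outputs

def forward(inputs, layers):
    # The activations of one layer become the inputs of the next layer.
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

layers = [
    ([[0.5, -0.5], [0.3, 0.8]], [0.0, 0.1]),  # hidden layer: 2 neurons
    ([[1.0, -1.0]], [0.0]),                   # output layer: 1 neuron
]

predicted = forward([1.0, 2.0], layers)
actual = 1.0
loss = (predicted[0] - actual) ** 2  # squared error between prediction and target
print(predicted, loss)
```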

Back Propagation

- Backpropagation reduces the error in a neural network by passing the error backward from the output layer through the earlier layers.

- It adjusts the weights and biases based on the error obtained in the previous iteration.

- Backpropagation uses optimization methods such as Gradient Descent to update weights.

- The goal is to improve the network’s prediction accuracy over time.
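
The weight-update idea can be sketched with gradient descent on a single linear neuron. With no hidden layers, the backward pass is only one chain-rule step, so this is a simplified illustration rather than full backpropagation. The training data, learning rate, and squared-error loss are assumptions for this example.

```python
def train_neuron(samples, learning_rate=0.05, epochs=200):
    w, b = 0.0, 0.0
    losses = []
    for _ in range(epochs):
        total = 0.0
        for x, target in samples:
            predicted = w * x + b        # forward pass
            error = predicted - target   # how far off the prediction is
            total += error ** 2
            # Gradients of the squared error with respect to w and b.
            grad_w = 2 * error * x
            grad_b = 2 * error
            # Move each parameter a small step against its gradient.
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
        losses.append(total / len(samples))
    return w, b, losses

# Hypothetical data generated from the rule target = 2x + 1.
samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
w, b, losses = train_neuron(samples)
print(round(w, 3), round(b, 3))  # close to 2.0 and 1.0
print(losses[0], losses[-1])     # the error shrinks as training proceeds
```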

Working of a Neural Network

A neural network works by processing input data through interconnected neurons (nodes). Each neuron performs simple mathematical calculations and passes the result to the next layer.

Imagine each neuron as a small calculator that performs a series of steps to process information:

- Receives input values

- Multiplies each input by its corresponding weight

- Adds all the weighted inputs together

- Adds a bias value

- Applies an activation function

- Produces an output

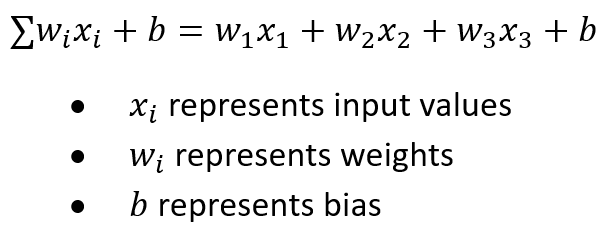

The weighted sum calculated by a neuron can be represented mathematically as:

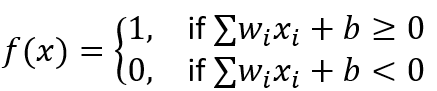

The neuron then applies an activation function to decide whether it should activate (fire) or not.

- If the result is greater than or equal to 0, the neuron produces output 1.

- If the result is less than 0, the neuron produces output 0.
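
The steps and the activation rule above can be sketched as a short function. The names `step` and `neuron_output` and the sample values are illustrative assumptions.

```python
def step(z):
    # Fires (1) when the result is greater than or equal to 0, otherwise stays at 0.
    return 1 if z >= 0 else 0

def neuron_output(inputs, weights, bias):
    # Multiply each input by its weight, add the products, then add the bias.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return step(z)

print(neuron_output([1, 1], [1, 1], -1))  # weighted sum is 1, so the output is 1
print(neuron_output([1, 0], [1, 1], -2))  # weighted sum is -1, so the output is 0
```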

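The output of a neuron can also be represented as:

$$
\text{output} =
\begin{cases}
1 & \text{if } \sum_{i} w_i x_i + b \ge 0 \\
0 & \text{if } \sum_{i} w_i x_i + b < 0
\end{cases}
$$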

Important Points

- Each input is assigned a weight that shows its importance.

- Inputs with higher weights have greater influence on the output.

- The activation function decides whether the neuron should activate or not.

- Activated neurons pass their output to the next layer.

- Information moves from the input layer to the output layer in one direction, so this type of network is called a Feedforward Neural Network.

The output generated by one neuron becomes the input for the next neuron in the network.

Working Example of a Neural Network

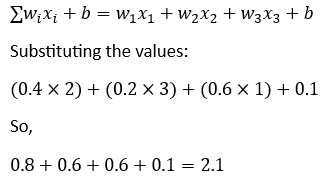

CASE I: Hidden Layer Calculation

Suppose the input features are represented as x1, x2, and x3.

Input Layer

- Feature x1 = 2

- Feature x2 = 3

- Feature x3 = 1

Hidden Layer Parameters

- Weight w1 = 0.4

- Weight w2 = 0.2

- Weight w3 = 0.6

- Bias b = 0.1

- Threshold = 3.0

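The weighted sum is calculated using the formula:

$$
\text{Weighted sum} = (x_1 \times w_1) + (x_2 \times w_2) + (x_3 \times w_3) + b = (2 \times 0.4) + (3 \times 0.2) + (1 \times 0.6) + 0.1 = 2.1
$$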

Applying the Threshold

- If Weighted sum ≥ Threshold → Output = 1 (Active)

- If Weighted sum < Threshold → Output = 0 (Inactive)

Here 2.1 < 3.0, therefore output = 0

This means the neuron in the hidden layer remains inactive.
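
The same calculation can be checked with a few lines of Python. The values come from the example above; treating a weighted sum exactly equal to the threshold as active is an assumption made here, and it does not affect this result.

```python
def weighted_sum(inputs, weights, bias):
    # Multiply each input by its weight, add the products, then add the bias.
    return sum(x * w for x, w in zip(inputs, weights)) + bias

inputs = [2, 3, 1]           # x1, x2, x3
weights = [0.4, 0.2, 0.6]    # w1, w2, w3
bias = 0.1
threshold = 3.0

z = weighted_sum(inputs, weights, bias)
# Assumption: a sum exactly equal to the threshold counts as active.
output = 1 if z >= threshold else 0

print(round(z, 2), output)  # 2.1 0
```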

Types of Neural Network

1. Standard Neural Network (Perceptron)

- The Perceptron is one of the earliest and simplest types of neural networks. It was developed by Frank Rosenblatt in 1958.

- A perceptron consists of one input layer and one output layer.

- Each input node is directly connected to the output node.

- It uses Threshold Logic Units (TLUs) as artificial neurons.
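
A minimal sketch of a perceptron with one threshold unit, trained on the AND gate with the classic perceptron learning rule. The learning rule, the AND-gate data, and the hyperparameters are not described in these notes and are assumptions added for illustration.

```python
def step(z):
    return 1 if z >= 0 else 0

def predict(inputs, weights, bias):
    # Inputs connect directly to the output unit: weighted sum, bias, then threshold.
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return step(z)

def train_perceptron(samples, epochs=20, learning_rate=1):
    weights = [0] * len(samples[0][0])
    bias = 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(inputs, weights, bias)
            # Nudge weights and bias only when the prediction is wrong.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Truth table of the AND gate: output is 1 only when both inputs are 1.
and_gate = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train_perceptron(and_gate)
print([predict(x, weights, bias) for x, _ in and_gate])  # [0, 0, 0, 1]
```

A single perceptron can only separate classes with a straight line, which is why it suits simple binary classification tasks such as this one.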

Application

- Spam email detection

- Simple decision-making systems

- Binary classification tasks

2. Feed Forward Neural Network (FFNN)

- A Feed Forward Neural Network (FFNN) is an advanced form of neural network also known as a Multi-Layer Perceptron (MLP).

- It consists of:

  - An input layer

  - One or more hidden layers

  - An output layer

- In FFNN, data moves only in one direction: input layer → hidden layer(s) → output layer

- FFNN uses weights, biases, and activation functions to process information.

Application

- Image recognition

- Natural Language Processing (NLP)

- Regression problems

- Pattern recognition tasks

3. Convolutional Neural Network (CNN)

- A Convolutional Neural Network (CNN) is a type of neural network specially designed for processing images and visual data.

- CNNs use filters (kernels) to extract important features from images.

- These features may include edges, shapes, patterns, and objects.

- Unlike standard neural networks, CNNs arrange data in a three-dimensional structure (width, height, and depth, such as the colour channels of an image), making them suitable for image-related tasks.
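
How a filter extracts a feature can be sketched by sliding a small kernel over a tiny grayscale image. The 4×4 image and the 2×2 vertical-edge kernel are made-up values, and the function slides the kernel without flipping it, which is an assumption about how the filter is applied.

```python
def convolve2d(image, kernel):
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for i in range(out_rows):
        row = []
        for j in range(out_cols):
            # Multiply the kernel with the image patch under it and add up the results.
            total = 0
            for di in range(k_rows):
                for dj in range(k_cols):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# Dark left half (0) and bright right half (1): a vertical edge in the middle.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Kernel that responds where brightness increases from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

print(convolve2d(image, kernel))  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The large values in the middle column of the result mark the position of the edge, while flat regions produce 0.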

Application

- Object detection

- Image recognition

- Style transfer

- Medical image analysis

- Face recognition systems

4. Recurrent Neural Network (RNN)

- A Recurrent Neural Network (RNN) is designed for processing sequential data.

- RNNs contain feedback connections that let information flow in loops, so the output from an earlier step in the sequence can influence later steps.

- If the prediction is incorrect, the network adjusts its weights during backpropagation to improve future predictions.
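
The feedback loop can be sketched with a single recurrent neuron that carries a hidden value from one step of the sequence to the next. The tanh activation, the weight names, and the input sequence are assumptions for illustration.

```python
import math

def rnn_forward(sequence, w_input, w_hidden, bias):
    hidden = 0.0  # memory carried between steps, starts empty
    states = []
    for x in sequence:
        # The new state depends on the current input and on the previous state.
        hidden = math.tanh(w_input * x + w_hidden * hidden + bias)
        states.append(hidden)
    return states

states = rnn_forward([1.0, 0.0, 0.0], w_input=0.5, w_hidden=0.8, bias=0.0)
print(states)
```

Even though the second and third inputs are 0, the later states stay above 0 because the first input is still remembered through the loop.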

Application

- Natural Language Processing (NLP)

- Machine translation

- Chatbots

- Speech recognition

- Time-series prediction

- Sentiment analysis

5. Generative Adversarial Network (GAN)

- A Generative Adversarial Network (GAN) consists of two neural networks that are trained against each other:

  - Generator → Creates new data samples

  - Discriminator → Checks whether a data sample is real or generated (fake)

- Both networks are trained simultaneously.

- The generator creates new data instances, while the discriminator evaluates them for authenticity.

- GANs are mainly used for unsupervised learning and synthetic data generation.

Application

- Image generation

- Video generation

- Style transfer

- Data augmentation

Future of Neural Networks and Their Impact on Society

- Neural networks improve efficiency and productivity by automating complex tasks.

- Neural networks help in streamlining processes and optimizing resource allocation.

- Neural networks play an important role in the rapid growth of Artificial Intelligence (AI).

- By analysing large amounts of data, they provide personalized products and services.

- They help generate tailored recommendations and user experiences based on individual preferences and needs.

- Neural networks have contributed to economic growth and created new job opportunities in fields like Data Science and Artificial Intelligence.

Challenges and Concerns

- The increasing use of neural networks also raises ethical and social concerns.

- Large-scale data collection may create data privacy issues.

- Algorithmic bias may lead to unfair or inaccurate results.

- Automation through neural networks may result in job displacement in some sectors.